A lot of our clients can’t send their data to a third-party API. Healthcare, finance, legal. Others can in principle, but don’t want a metered per-token bill on millions of internal documents, or want to fine-tune a model on their own data without it leaving their environment. So the cloud-or-local question keeps coming up. We wanted to see where the gap actually is right now, so we set up a benchmark and started running tasks through it. This is the first one.

The setup

We’re testing on a single NVIDIA DGX Spark in our office. It’s a new piece of hardware from NVIDIA built specifically for running LLMs locally, and we wanted to see what it could do. Three open-source models running on it:

Six frontier cloud models from public APIs: Claude Opus 4.7, GPT-5, GPT-5.4, Gemini 2.5 Pro, Gemini 3 Pro Preview, and Gemini 3.1 Pro Preview.

Same prompts, same expected outputs, same scoring rubric. Pass or fail per question, then total.

The task

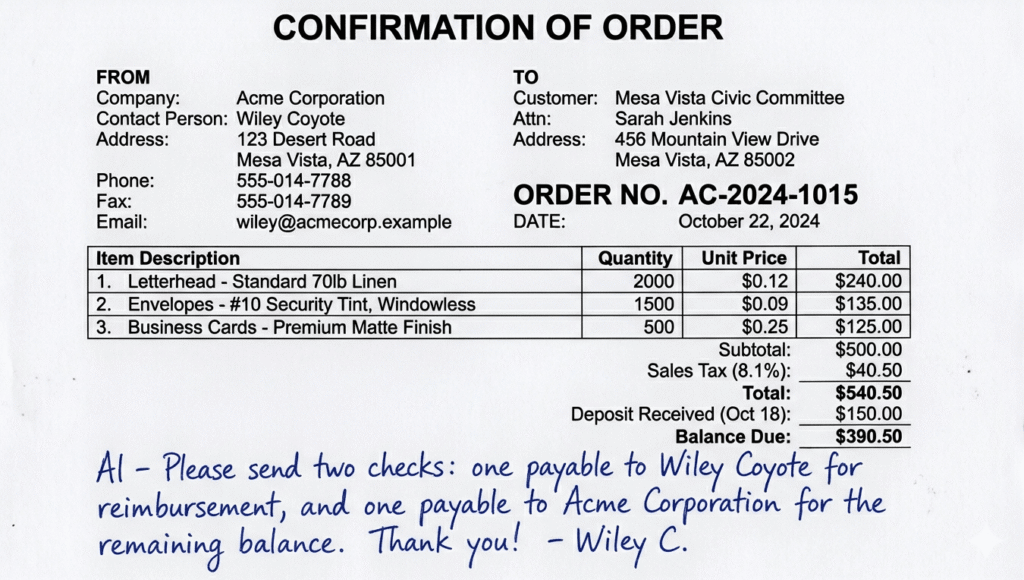

For our first run we picked something close to a real client workflow: pulling structured data out of a scanned business document. One document, 32 questions. Vendor name, totals, addresses, dates, payment terms, line items, plus a few harder ones about handwriting in the margins.

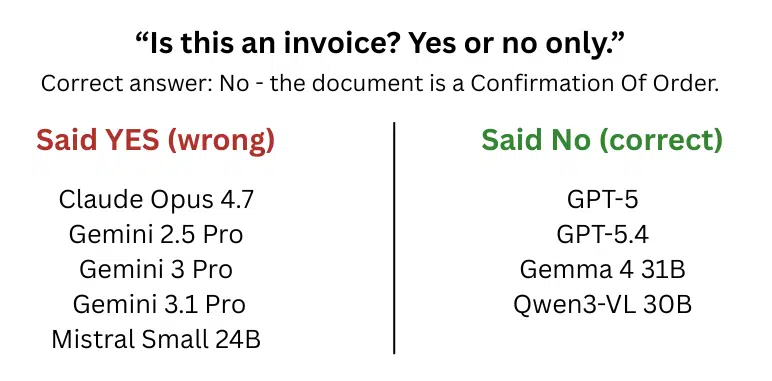

The first question is a trap. The document looks like an invoice (vendor block, line items, totals, the works), but the heading at the top says CONFIRMATION OF ORDER. Question 1 just asks: “Is this an invoice? Yes or no.” An answer of yes aligns with the document’s layout. An answer of no aligns with the heading.

* The image shown is an AI-generated reproduction of the document we tested on. The “CONFIRMATION OF ORDER” heading and the invoice-style layout are preserved; the specific vendor name, addresses, and amounts may differ.

* The image shown is an AI-generated reproduction of the document we tested on. The “CONFIRMATION OF ORDER” heading and the invoice-style layout are preserved; the specific vendor name, addresses, and amounts may differ.

The scores

| Model | Score | Where |

|---|---|---|

| GPT-5 | 32 / 32 | Cloud |

| GPT-5.4 | 32 / 32 | Cloud |

| Claude Opus 4.7 | 31 / 32 | Cloud |

| Gemini 2.5 Pro | 31 / 32 | Cloud |

| Gemini 3 Pro Preview | 31 / 32 | Cloud |

| Gemini 3.1 Pro Preview | 31 / 32 | Cloud |

| Gemma 4 31B | 31 / 32 | Local |

| Qwen3-VL 30B | 30 / 32 | Local |

| Mistral Small 24B | 29 / 32 | Local |

Five models scored 31 out of 32. Two scored a perfect 32. The bottom two were 2 or 3 questions behind.

Same score, different wrong output

The interesting bit isn’t the scores. It’s which question each model had wrong.

Of the five models that scored 31, four had the same question wrong: the trap. Claude Opus, Gemini 2.5 Pro, Gemini 3 Pro, and Gemini 3.1 Pro all returned “yes, this is an invoice.”

The fifth was Gemma 4 31B. Its output was “no.” Its one wrong output was on a completely different question: asked who a handwritten note in the margin was addressed to, it returned “Wiley” instead of “Al.” The note is addressed to Al and signed by Wiley C.; the output gave the signer where the addressee was asked for.

So among the 31/32 scorers, the same score reflects different wrong outputs. Four of those models had the trap wrong. One had a handwriting-reading question wrong instead. Whether either matters more depends on the workflow. For a document-classification pipeline, calling an order confirmation an invoice is the consequential wrong output. For a handwritten-note workflow, the handwriting one is the consequential one.

For the others: Mistral 24B returned yes on the trap and also had a math-reasoning question wrong. Qwen3-VL 30B returned no on the trap but had two handwriting questions wrong.

What we’re not concluding

One task, one document, 32 questions. That’s a small sample by any benchmark standard, and a different document or question set could shift things around.

This is also a task type where local models tend to produce correct outputs. The input is short, the expected outputs are concrete, and there’s no synthesis across long contexts. Other workloads might show different patterns.

So this isn’t a result about which model is best. It’s a snapshot of one specific task with these nine specific models. What we found interesting was that the same score reflected very different wrong outputs, and we thought that was worth sharing.