AI is already making decisions that affect your customers, your employees, and your bottom line. It is used to approve loans, screen job applications, forecast demand, and route customer service requests, often without a human in the room.

These decisions now reach almost every sector, department, and process. Yet most organizations have no structured way to oversee how those decisions are made, who is accountable when they go wrong, or whether the systems producing them were ever tested for fairness and accuracy.

That gap has a cost. According to EY’s 2025 Responsible AI Pulse survey of 975 C-suite leaders across 21 countries, 99% of organizations surveyed reported financial losses from AI-related risks, with average losses conservatively estimated at $4.4 million per company.

AI governance is the structure that closes that gap. Without it, organizations scale AI at the speed of adoption and manage the consequences at the speed of crisis. With it, they scale with confidence.

This article explains what AI governance is, what it covers, and why enterprises that treat it as a strategic priority are pulling ahead of those that don’t.

What Is AI Governance?

Think of AI governance the way you would think of financial controls inside a company. No responsible organization lets revenue flow in and out without policies, audit trails, and accountability structures. AI decisions, which now touch hiring, lending, pricing, and operations, deserve the same treatment.

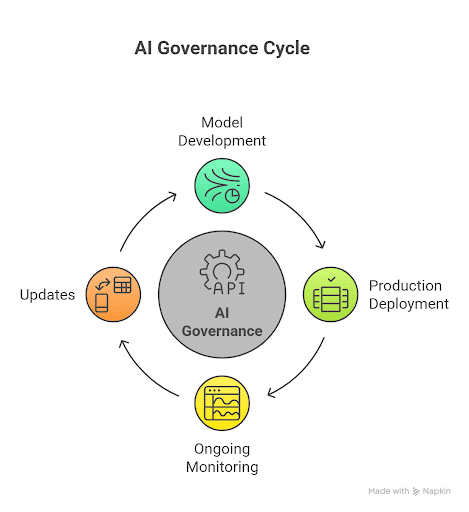

AI governance is the set of policies, processes, and oversight mechanisms used to manage AI systems across their entire lifecycle, from initial model development through deployment, monitoring, and eventual retirement. It encompasses the guardrails that help ensure AI tools and systems are and remain safe, ethical, and aligned with organizational and societal values.

What separates AI governance from simply “having an AI policy” is scope. Governance applies at every stage:

- Model development: Defining what data is used, how bias is tested for, and what standards a model must meet before it is approved for deployment.

- Production deployment: Establishing who approves AI systems for use, what documentation is required, and how integration with existing infrastructure is managed.

- Ongoing monitoring and updates: Continuously tracking model performance, detecting drift, and flagging outputs that deviate from expected behavior as real-world conditions change.

Governance also draws on adjacent disciplines such as data governance, security governance, and risk management.

- Data governance determines what data AI systems can access and how it is handled.

- Security governance addresses how AI integrates with sensitive systems and what vulnerabilities it introduces.

- Risk management establishes how AI-related risks are identified, categorized, and escalated.

Enterprise AI governance combines all three into a coherent oversight structure.

Why AI Governance Matters for Enterprises

EY’s 2025 Responsible AI Pulse survey found that only 12% of C-suite executives can correctly identify the appropriate controls for common AI risks. Lack of knowledge about the technology is not the culprit here. This is a governance gap.

EY’s survey shows that most of the companies surveyed allow their employees to develop or deploy AI agents. Yet 40% of these companies report having no formal, organization-wide policies and frameworks aligned with responsible AI principles. The survey ranks non-compliance as the biggest AI risk organizations face today.

The Shift Has Happened

Five years ago, AI governance was a concern for data science teams. Today, it is a boardroom conversation and, in many organizations, a regulatory requirement.

The shift reflects where AI has moved inside the enterprise. Organizations are no longer running AI as a parallel experiment in an innovation lab. AI now influences decisions with direct business and human consequences: which candidates advance in a hiring process, which customers are offered credit, how operational risk is assessed, and how customer service interactions are resolved.

Financial Consequences of AI-Related Risks

When those decisions go wrong, the consequences are not theoretical. EY’s survey found that 64% of organizations experienced AI-related losses exceeding $1 million. But the business case for governance does not rest only on risk avoidance. In PwC’s 2025 US Responsible AI Survey, 58% of executives said responsible AI initiatives improve return on investment and organizational efficiency, and 55% reported improvements in customer experience and innovation.

Organizations at the most advanced stage of governance are 1.5 to 2 times more likely to describe their governance capabilities as effective than those still building foundational policies. Governance, in short, is not a brake on AI adoption. It is the structure that allows adoption to scale.

- AI governance is the oversight structure that manages AI systems from development through monitoring and retirement. It is not a one-time compliance exercise.

- Organizations with mature governance programs report stronger ROI, better customer outcomes, and significantly fewer costly AI incidents.

- 99% of organizations in EY’s 2025 survey reported AI-related financial losses, making governance a direct business priority, not a theoretical one.

The Risks of Operating AI Without Governance

An AI system without governance is one that makes consequential decisions without accountability, auditability, or correction mechanisms. Four categories of risk define what that looks like in practice.

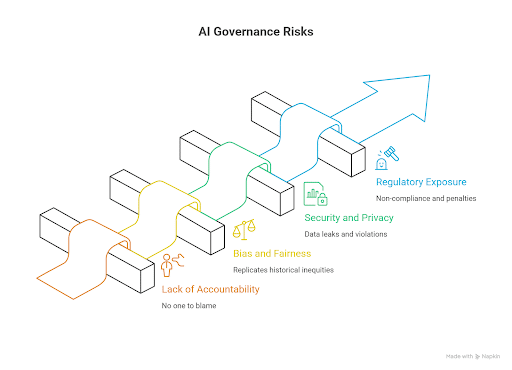

1. Lack of Accountability

When an AI model produces a harmful or incorrect output, such as a biased hiring recommendation, an inaccurate fraud flag, or a flawed credit denial, organizations without governance structures frequently cannot determine who is responsible or why the system behaved as it did. There is no owner, no audit trail, and no escalation path.

2. Bias and Fairness Failures

AI models learn from historical data. When that data reflects historical inequities, models trained on it replicate and often amplify those inequities. In regulated domains such as lending, insurance, employment, and healthcare, biased outputs bring legal exposure and reputational damage. EY’s 2025 survey cited biased outputs among the three most commonly reported AI risks across organizations, regardless of sector or size.
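
One common way to make a fairness check concrete is to compare how often each group receives the favorable outcome. The sketch below computes a disparate impact ratio and flags it against the four-fifths (80%) rule of thumb used in US employment contexts. The function names, the sample data, and the choice of threshold are illustrative assumptions, not a test taken from the EY survey or required by any specific regulation.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, approved) pairs, where `approved`
    is True when the model produced the favorable outcome.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in records:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received the favorable outcome, so nothing to compare
    return min(rates.values()) / highest

# Hypothetical screening outcomes for two groups (illustrative data only).
sample = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)
ratio = disparate_impact_ratio(sample)
needs_review = ratio < 0.8  # assumed threshold: the four-fifths rule of thumb
```

A single ratio like this is a screening signal, not a verdict. A governance process would decide which groups and outcomes to measure and what happens when the flag is raised.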

3. Security and Privacy Exposure

AI systems process and generate data continuously, often integrating with sensitive internal systems. Without governance policies for data access, model inputs, and output handling, organizations face heightened exposure to data leaks, unauthorized model use, and privacy violations, including under regulations that impose direct financial penalties.

4. Regulatory Exposure

The EU AI Act, GDPR, and a growing body of sector-specific AI regulations are establishing enforceable requirements around transparency, risk classification, and documentation. Deloitte’s 2025 survey of 695 board members and C-suite executives across 56 countries found that preparedness has not improved in the critical area of risk and governance, even as it has improved in other areas.

None of these risks is hypothetical. They are documented outcomes from real deployments across industries and organizations of all sizes.

Key Elements of Effective AI Governance

Governance is not a single tool or a single policy document. It is a set of interconnected capabilities that together keep AI systems accountable, auditable, and aligned with organizational objectives.

1. Policy and Oversight

Organizations define explicit rules governing how AI systems can be developed, what data they can use, what testing is required before deployment, and what outputs are acceptable. Without a written policy, governance exists only as an informal expectation. In the real world, that cannot be called governance.

2. Accountability and Ownership

Clear roles are assigned for approving models, monitoring production performance, and addressing risks when they materialize. In practice, this means designated AI owners at the business unit level, an oversight committee or equivalent body, and defined escalation paths.

3. Transparency and Explainability

AI systems must be documented and auditable. Stakeholders, including regulators, customers, and internal reviewers, need to understand how decisions are made, what data drives them, and where the model’s limits lie. This is not only an ethical principle but increasingly a legal requirement under frameworks like the EU AI Act.

4. Continuous Monitoring

AI models are not static. Data distributions shift, real-world conditions change, and model behavior can drift significantly from what was tested in development. Governance requires ongoing evaluation to track accuracy, bias indicators, and output quality. It is not just a one-time sign-off before deployment.
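
Drift detection can be sketched with the Population Stability Index (PSI), a widely used measure of how far a feature or score distribution in production has moved from its development baseline. The code below is a minimal illustration: the function name, the equal-width binning, and the 0.25 alert level (a common convention, not a standard) are all assumptions.

```python
import math

def population_stability_index(baseline, current, bins=10):
    """PSI between a baseline sample and a current sample of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # all baseline values identical; everything lands in one bin

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps log() defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = bin_shares(baseline), bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))

# Hypothetical model scores at validation time and after a shift in production.
training_scores = [i / 100 for i in range(100)]
production_scores = [s + 0.3 for s in training_scores]

psi = population_stability_index(training_scores, production_scores)
drift_detected = psi > 0.25  # assumed alert level; tune per model and feature
```

In a governed program, a raised flag would trigger the defined review path rather than an automatic retrain.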

5. Human Oversight at Defined Decision Points

Not every AI decision should run without human review. Governance frameworks establish where human oversight is required, particularly in high-stakes domains like healthcare, finance, and hiring, and build those checkpoints into operational workflows.
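
A review checkpoint can be as simple as a routing rule applied before an AI decision takes effect. The sketch below sends every decision in a high-stakes domain, plus any low-confidence decision elsewhere, to a human reviewer. The domain list comes from the examples above; the `Decision` type, the function name, and the 0.9 confidence cutoff are hypothetical.

```python
from dataclasses import dataclass

# Domains named above as high-stakes; a real policy would define its own list.
HIGH_STAKES_DOMAINS = {"healthcare", "finance", "hiring"}

@dataclass
class Decision:
    domain: str        # business area the decision belongs to
    confidence: float  # model's confidence in its output, from 0.0 to 1.0
    outcome: str       # what the model proposes to do

def requires_human_review(decision, min_confidence=0.9):
    """Return True when the decision must pause for a human reviewer."""
    if decision.domain in HIGH_STAKES_DOMAINS:
        return True  # assumed policy: high-stakes decisions are always reviewed
    return decision.confidence < min_confidence

queue = [
    Decision("hiring", 0.99, "reject"),
    Decision("demand_forecast", 0.95, "increase_stock"),
    Decision("demand_forecast", 0.50, "decrease_stock"),
]
to_review = [d for d in queue if requires_human_review(d)]
```

Here the hiring decision is held despite high confidence, which reflects the assumed policy that stakes, not model certainty, determine where review is mandatory.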

| Governance Element | What It Does | What Breaks Without It |
|---|---|---|
| Policy and oversight | Defines rules for AI development and deployment | Ad-hoc decisions, inconsistent standards across teams |
| Accountability and ownership | Assigns roles for approval, monitoring, and risk escalation | No owner when outputs cause harm or regulatory scrutiny arrives |
| Transparency and explainability | Documents how decisions are made and keeps them auditable | Regulatory exposure, loss of stakeholder and customer trust |
| Continuous monitoring | Tracks model performance and detects drift over time | Silent degradation, undetected bias accumulation in production |
| Human oversight | Defines where human review is required in high-stakes decisions | Consequential decisions made without appropriate human judgment |

Challenges Organizations Face When Implementing AI Governance

Most organizations recognize that governance matters. The gap is between recognition and execution. Four challenges account for the majority of implementation failures.

1. AI Adoption Outpacing Oversight

AI deployment in many enterprises has been decentralized and fast. Individual teams adopt tools, build models, and deploy agents without coordinated oversight. EY’s 2025 survey found that two-thirds of companies allow “citizen developers” to independently deploy AI agents, yet only 60% have formal organization-wide policies for responsible use, and half report limited visibility into how those agents are actually being used.

2. Limited Internal Expertise

AI risk management requires a combination of technical knowledge, legal understanding, and operational judgment that most organizations do not have concentrated in a single function. PwC’s 2025 Responsible AI Survey identified operationalization, i.e., turning responsible AI principles into scalable, repeatable processes, as the biggest hurdle, cited by half of the respondents.

3. Unclear Ownership Between Functions

AI governance requires coordination across engineering, compliance, legal, HR, and leadership. Without clear ownership, each function defers to the others, and governance stalls. Deloitte’s 2025 survey found that 66% of boards still report limited to no knowledge or experience with AI. This is an improvement from 79% in the prior year, but still a significant oversight gap at the leadership level.

4. Evolving Regulatory Requirements

The regulatory landscape for AI is not stable. New requirements under the EU AI Act, sector-specific guidance in financial services and healthcare, and evolving data privacy obligations create a moving compliance target. Organizations that treat governance as a fixed policy document rather than an adaptive program will find themselves out of compliance before the next review cycle.

These challenges do not cancel each other out. They compound. A team with limited expertise, unclear ownership, and fast-moving decentralized deployment faces a governance gap that grows every quarter without deliberate intervention.

Global AI Governance Frameworks Enterprises Should Know

One practical way to approach AI governance is through the major frameworks that have emerged to standardize it. These are not substitutes for internal governance. Consider them as reference architectures that give organizations a structured starting point and, in some cases, enforceable compliance obligations.

1. NIST AI Risk Management Framework (AI RMF)

Developed by the US National Institute of Standards and Technology, the NIST AI RMF is organized around four core functions: Govern, Map, Measure, and Manage. It is sector-agnostic, voluntary, and designed to integrate with existing enterprise risk programs. For organizations operating in or with US federal agencies, it is the closest thing to an industry standard.

2. EU AI Act

The EU AI Act is the most comprehensive binding AI regulation currently in force globally. It classifies AI systems into four risk levels (unacceptable, high, limited, and minimal) and imposes requirements for transparency, human oversight, documentation, and conformity assessments on high-risk systems. High-risk categories include AI used in hiring, credit scoring, healthcare, critical infrastructure, and law enforcement. For any organization operating in or selling into the EU, compliance is not optional.
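
Risk tiers like these are often recorded in a model inventory so that each system carries a provisional classification and a list of controls. The sketch below is a deliberately simplified triage, not a legal classification under the Act: the use-case labels and requirements mirror only the examples named above, and the `InventoryEntry` fields, flags, and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum

class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"

# Use-case labels taken from the high-risk examples named in this article.
HIGH_RISK_USE_CASES = {
    "hiring", "credit_scoring", "healthcare",
    "critical_infrastructure", "law_enforcement",
}

# Requirements this article lists for high-risk systems.
HIGH_RISK_REQUIREMENTS = (
    "transparency", "human oversight", "documentation", "conformity assessment",
)

@dataclass
class InventoryEntry:
    name: str
    use_case: str
    owner: str
    prohibited_practice: bool = False  # assumption: set by legal review
    user_facing: bool = False          # assumption: proxy for a transparency-duty review

def provisional_tier(entry):
    """First-pass tier for the inventory; legal review makes the final call."""
    if entry.prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if entry.use_case in HIGH_RISK_USE_CASES:
        return RiskTier.HIGH
    if entry.user_facing:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL

def required_controls(entry):
    """Controls to track for the entry; empty unless provisionally high-risk."""
    if provisional_tier(entry) is RiskTier.HIGH:
        return list(HIGH_RISK_REQUIREMENTS)
    return []

screener = InventoryEntry("resume-screener", "hiring", "HR analytics")
forecaster = InventoryEntry("demand-forecast", "demand_forecasting", "Supply chain")
```

The value of a sketch like this lies less in the rule itself than in forcing every production system to have a named owner and a recorded tier.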

3. ISO/IEC 42001

The first international standard for AI management systems, ISO/IEC 42001, provides a certifiable governance framework aligned with other ISO management systems. Organizations already certified under ISO/IEC 27001 or ISO 9001 will find the structure familiar. It is particularly relevant for demonstrating governance maturity to enterprise customers and regulators.

4. GDPR

The General Data Protection Regulation governs how personal data is collected, processed, and used across the EU and by organizations handling EU residents’ data. AI systems that process personal data, which includes most enterprise AI deployments, fall within GDPR’s scope.

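Compliance requires a lawful basis for processing, data minimization, and purpose limitation. For automated decisions that significantly affect individuals, GDPR also entitles people to meaningful information about the logic involved and to request human intervention, often summarized as a “right to explanation.”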

5. Singapore Model AI Governance Framework

Singapore’s Model AI Governance Framework addresses AI ethics in the private sector and provides practical guidance on operationalization. A generative AI-specific edition was released in May 2024. The framework is particularly relevant for organizations with Asia-Pacific operations.

Organizations do not choose a single framework. Most enterprises operating across geographies need to align with several simultaneously, which is precisely why governance infrastructure must be adaptive.

Governance Readiness Checklist

| Readiness Factor | In Place | Partial | Not Yet |
|---|---|---|---|
| Written policy governing AI development and deployment | □ | □ | □ |
| Designated ownership for each AI system in production | □ | □ | □ |
| Documented model inventory with risk classification | □ | □ | □ |
| Bias and fairness testing conducted before deployment | □ | □ | □ |
| Continuous monitoring in place for production models | □ | □ | □ |
| Defined escalation path for AI-related incidents | □ | □ | □ |
| Cross-functional governance committee or equivalent body | □ | □ | □ |
| Alignment with applicable regulatory frameworks (EU AI Act, GDPR, NIST) | □ | □ | □ |
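
For teams that want to track the checklist over time, the answers can be tallied into a simple score and a gap list. The sketch below is one possible approach: the status labels, the 2/1/0 weighting, and the function name are assumptions for illustration, not part of any framework.

```python
# Assumed weighting: full credit for "in place", half for "partial".
SCORES = {"in_place": 2, "partial": 1, "not_yet": 0}

def readiness_summary(answers):
    """Summarize checklist answers given as {readiness factor: status}."""
    if not answers:
        raise ValueError("answers must contain at least one readiness factor")
    unknown = set(answers.values()) - set(SCORES)
    if unknown:
        raise ValueError(f"unknown status values: {sorted(unknown)}")
    earned = sum(SCORES[status] for status in answers.values())
    possible = 2 * len(answers)
    gaps = [factor for factor, status in answers.items() if status != "in_place"]
    return {"score_pct": round(100 * earned / possible), "gaps": gaps}

# Hypothetical answers for four of the factors in the table.
answers = {
    "Written AI policy": "in_place",
    "Designated ownership": "partial",
    "Model inventory with risk classification": "partial",
    "Continuous monitoring": "not_yet",
}
summary = readiness_summary(answers)
```

The gap list is usually more useful than the percentage, since it names the specific factors that still need an owner and a plan.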

AI Governance Is the Foundation of Trustworthy Enterprise AI

Trust is becoming the defining factor in enterprise AI adoption, not just with regulators, but with customers, employees, and partners. Organizations with real-time AI monitoring are 34% more likely to see improvements in revenue growth and 65% more likely to see improved cost savings compared to those without it (EY, October 2025).

Organizations that have built governance infrastructure are scaling AI with confidence. Those still treating it as a future priority are accumulating risk on every deployment they make today.

RTS Labs works with mid-market and enterprise organizations to build the governance infrastructure that makes AI adoption sustainable. We help our partners with:

- Defining accountability structures and ownership models for AI systems already in production

- Building model documentation and monitoring frameworks that meet regulatory requirements, and

- Establishing the cross-functional governance processes that keep AI programs aligned with business objectives as they scale.

For organizations moving from AI experimentation to AI operations, governance is not the final step. It is what makes the transition possible.

Frequently Asked Questions (FAQs)

1. What Is the Difference Between AI Governance and AI Ethics?

Ethics defines the principles of fairness, transparency, accountability, and non-harm. AI governance is the operational structure that puts those principles into practice. It includes the policies, roles, monitoring tools, and audit processes that make ethical commitments enforceable rather than aspirational.

2. What Role Do Regulations Play in AI Governance?

Regulations like the EU AI Act and GDPR set minimum requirements for transparency, documentation, and risk management. Governance frameworks organize internal capabilities to meet those requirements and ideally exceed them, since regulatory floors tend to lag what well-run programs already do.

3. How Does AI Governance Help Prevent Model Bias?

Governance requires bias testing before deployment, ongoing monitoring of model outputs for demographic disparities, and defined processes for retraining or retiring models that produce discriminatory results. Without those structures, bias is often invisible until it produces a public failure or a regulatory finding.

4. What Role Does Model Monitoring Play in AI Governance?

Monitoring is how governance maintains accountability after deployment. Models degrade, data distributions shift, and outputs that were accurate at launch can become unreliable or biased over time. Continuous monitoring detects that drift and triggers the review and correction processes that governance frameworks define.

5. How Does AI Governance Apply to Generative AI Systems?

Generative AI introduces governance challenges that traditional ML models do not. Hallucinated outputs, uncontrolled content generation, and integration with public-facing systems can amplify failures at scale.

Governance for generative AI requires output monitoring, human review gates for high-stakes use cases, clear policies on data used for training and fine-tuning, and defined escalation procedures when models produce harmful or inaccurate content.