Enterprise AI adoption is rising rapidly, but success rates aren’t. A study by S&P Global finds that 42% of companies abandoned most of their AI initiatives, and that the average organization scrapped 46% of its AI proofs of concept before they reached production.

Most organizations run into the same blockers. Siloed data, integration complexity, unclear ownership, weak governance, and resistance to workflow change all slow adoption and deployment. The result is costly pilots that never see production.

This guide breaks down the nine biggest enterprise AI adoption challenges and provides practical, engineering-first strategies to overcome them. We will also discuss how RTS Labs helps enterprises turn stalled AI initiatives into scalable, ROI-driven systems.

AI Adoption Challenges Enterprises Face During the Project Lifecycle

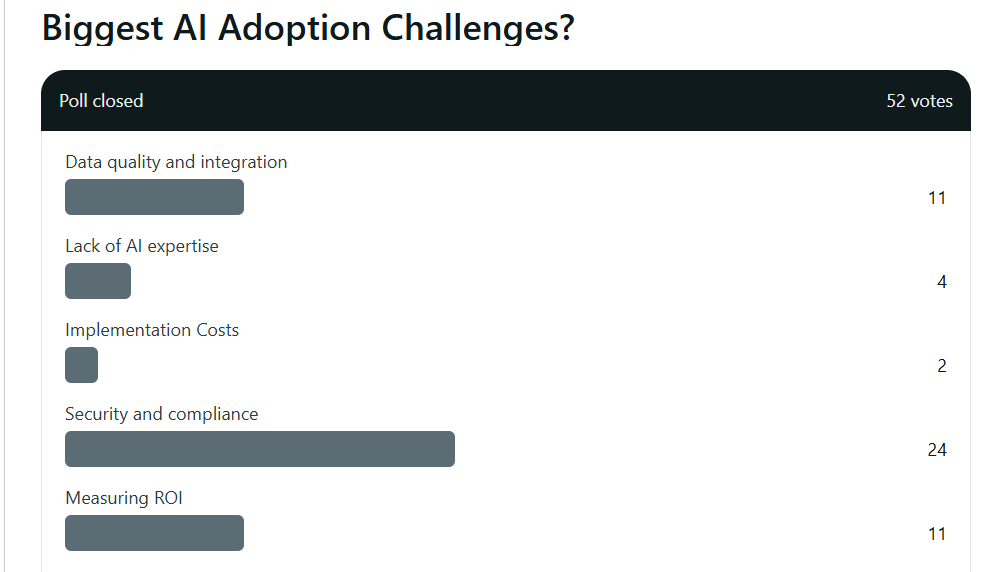

Enterprises face a consistent pattern of AI roadblocks. A Reddit poll puts data quality, security and compliance, and measuring ROI as the top three challenges enterprises face in their AI adoption journey.

Below is a concise overview of the challenges this guide explores and why each one prevents AI from scaling beyond isolated pilots.

# Challenge 1: How Do Poor Data Readiness and Fragmented Data Ecosystems Impact AI Adoption?

For most enterprises, AI failures trace back to one root cause: the data foundation isn’t ready for AI. According to Gartner, 63% of organizations either do not have or are unsure if they have the right data management practices for AI.

Even when companies have large volumes of data, it is often siloed across ERPs, CRMs, data warehouses, on-prem systems, spreadsheets, and third-party platforms. Inconsistent schemas, missing metadata, poor lineage tracking, and a lack of governance create an environment where AI models cannot learn, predict, or scale reliably.

When AI relies on fragmented or untrusted data, results become unstable. Predictions drift, workflows break, and stakeholders lose confidence in the system, causing leadership to halt or delay AI rollout across business units.

The table below summarizes the consequences of poor data readiness during AI development:

| Impact Area | Business Risk |
|---|---|
| Model Accuracy | Incorrect predictions due to inconsistent or incomplete data |
| Operational Reliability | AI workflows break when upstream data changes |
| Compliance Exposure | Missing lineage and auditability lead to regulatory risk |
| Scaling Difficulty | AI cannot be reused across teams because data isn’t unified |
| Cost Overruns | Rework, manual cleaning, and repeated model training inflate costs |

How to Mitigate This Challenge

A structured data foundation is the fastest way to reduce AI risk and increase production readiness. Enterprises should:

- Unify data sources into a governed warehouse or lakehouse for a single source of truth across departments.

- Implement data quality pipelines covering validation, anomaly detection, and automated corrections.

- Enable end-to-end lineage and metadata tracking so teams understand where data originates and how it transforms.

- Introduce strict access and entitlement controls to meet privacy, compliance, and audit standards.

- Adopt interoperability standards that ensure ERP, CRM, financial systems, and operational databases can exchange data reliably.

These steps help enterprises achieve a stable, governed, and scalable data layer, which is essential for enterprise AI systems to operate consistently.
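
As a rough illustration of the validation step in a data quality pipeline, the sketch below applies three checks to a batch of records: required fields, numeric ranges, and duplicate IDs. It is a minimal Python sketch rather than a reference implementation. The names `validate_rows` and `QualityReport`, and the fields `customer_id` and `amount`, are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass, field


@dataclass
class QualityReport:
    """Holds rows that passed validation and the reasons others were rejected."""
    valid_rows: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (row_index, reason) pairs


def validate_rows(rows, required_fields, numeric_ranges, id_field):
    """Check required fields, numeric ranges, and duplicate IDs for each row."""
    report = QualityReport()
    seen_ids = set()
    for index, row in enumerate(rows):
        missing = [name for name in required_fields if row.get(name) in (None, "")]
        if missing:
            report.rejected.append((index, "missing: " + ", ".join(missing)))
            continue
        out_of_range = [
            name
            for name, (low, high) in numeric_ranges.items()
            if row.get(name) is not None and not (low <= row[name] <= high)
        ]
        if out_of_range:
            report.rejected.append((index, "out of range: " + ", ".join(out_of_range)))
            continue
        if row.get(id_field) in seen_ids:
            report.rejected.append((index, "duplicate " + id_field))
            continue
        seen_ids.add(row.get(id_field))
        report.valid_rows.append(row)
    return report


# Hypothetical sample batch, for illustration only.
sample = [
    {"customer_id": "C1", "amount": 120.0},
    {"customer_id": "C2", "amount": -5.0},   # negative amount
    {"customer_id": "", "amount": 10.0},     # missing ID
    {"customer_id": "C1", "amount": 40.0},   # duplicate ID
]
result = validate_rows(sample, ["customer_id", "amount"], {"amount": (0, 10_000)}, "customer_id")
for row_index, reason in result.rejected:
    print(f"row {row_index} rejected: {reason}")
```

Rejected rows are kept with a reason instead of being silently dropped, which gives data owners something concrete to fix upstream.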

# Challenge 2: Inability to Scale From Pilot to Production

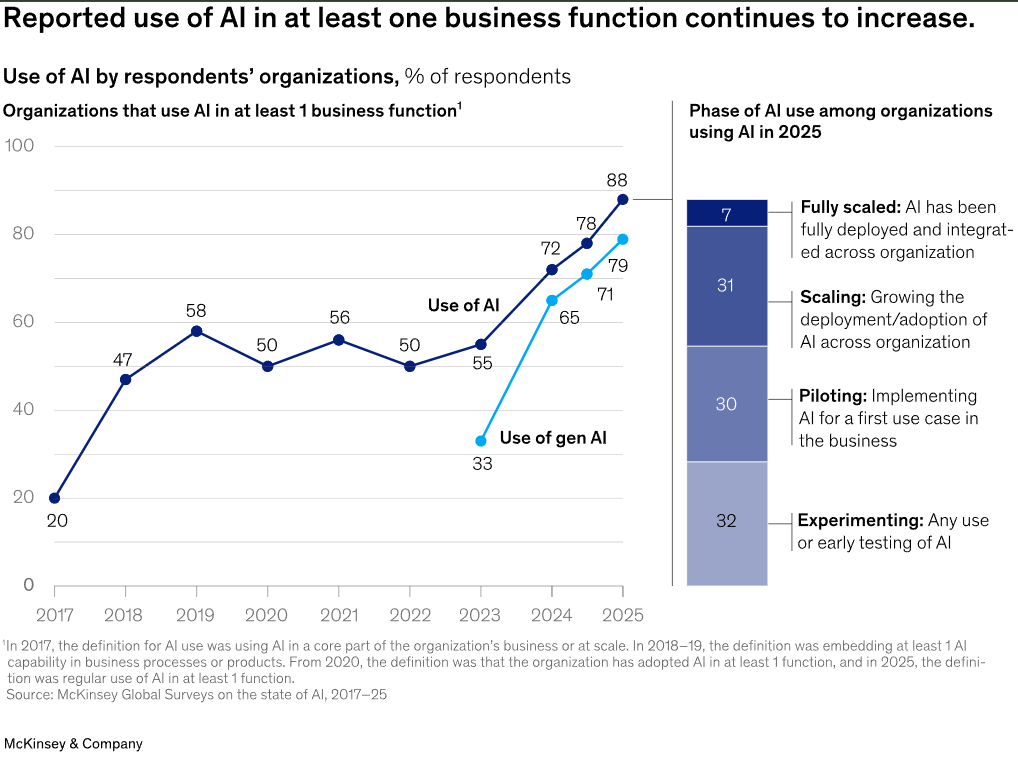

Even when pilots show promise, most enterprises struggle to move AI into real-world operations. McKinsey’s research shows that only one-third of AI pilots make it into production, largely because organizations underestimate the engineering, infrastructure, and governance required to operationalize AI at scale.

Pilots are often built in isolated sandboxes with temporary data extracts, manual workflows, and assumptions that do not hold once AI interacts with live systems, compliance requirements, or cross-team business processes.

This pilot trap keeps enterprises stuck in experimentation mode, spending time and money on POCs that never generate business value. Without the ability to industrialize AI, companies face rising costs, declining stakeholder confidence, and lost momentum across departments that were eager for AI-driven transformation.

When AI projects fail to scale, the consequences include:

| Impact Area | Business Risk |
|---|---|
| Operationalization Failures | AI cannot be embedded into real workflows, causing projects to stall |
| Wasted Budgets | POCs consume resources but fail to deliver ROI |
| Loss of Executive Confidence | Leadership begins questioning AI investments |
| Shadow AI Builds | Teams create ungoverned, inconsistent models to bypass constraints |
| No Enterprise-Wide Value | Gains remain isolated instead of compounding across functions |

How to Overcome This Challenge

Scaling requires a fundamentally different approach than prototyping. Enterprises should:

- Standardize MLOps and deployment frameworks to automate CI/CD for models, versioning, testing, and rollout pipelines.

- Design reusable model components to prevent teams from building one-off experiments that cannot be extended.

- Create enterprise-wide data contracts and SLAs to maintain consistency of inputs for all AI models.

- Integrate AI into core operational systems early, including ERPs, CRMs, financial systems, supply chain platforms, and internal applications.

- Define production KPIs and monitoring metrics to track drift, performance, and accuracy in real-world conditions.

- Establish cross-functional ownership involving business, engineering, and compliance teams in production rollout.

These steps help enterprises move beyond proofs of concept and create AI systems that deliver sustained business outcomes.
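
To make the idea of a data contract more concrete, here is a small Python sketch that checks each incoming record against an agreed list of fields and types before it reaches a model. The contract contents, the `FieldSpec` class, and the `contract_violations` function are assumptions made for illustration. Real contracts typically also cover freshness, volume, and ownership.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One agreed field in the contract between a data producer and a consumer."""
    name: str
    expected_type: type
    nullable: bool = False


# Hypothetical contract for a feature feed consumed by a churn model.
CHURN_FEATURES_CONTRACT = (
    FieldSpec("customer_id", str),
    FieldSpec("tenure_months", int),
    FieldSpec("monthly_spend", float),
    FieldSpec("region", str, nullable=True),
)


def contract_violations(record, contract):
    """Return readable violations; an empty list means the record conforms."""
    violations = []
    for spec in contract:
        if spec.name not in record:
            violations.append(f"{spec.name}: field missing")
            continue
        value = record[spec.name]
        if value is None:
            if not spec.nullable:
                violations.append(f"{spec.name}: null not allowed")
            continue
        if not isinstance(value, spec.expected_type):
            violations.append(
                f"{spec.name}: expected {spec.expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    return violations


# A producer changed tenure_months to a string and dropped region.
broken = {"customer_id": "C2", "tenure_months": "12", "monthly_spend": 49.9}
for problem in contract_violations(broken, CHURN_FEATURES_CONTRACT):
    print(problem)
```

Running a check like this in the ingestion path surfaces upstream schema changes as explicit violations rather than as unexplained drops in model accuracy.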

# Challenge 3: How Do Integration Complexities of Legacy Enterprise Systems Deter AI Adoption?

Once enterprises move past pilots, the next and often biggest barrier is integration. AI doesn’t create value in isolation. It must plug into ERP systems, CRMs, data warehouses, billing platforms, HRIS, supply chain systems, IoT infrastructure, and proprietary legacy apps that were never designed to support modern AI workloads.

AI may function well in a controlled environment. But in production, it must interface with live workflows, security layers, APIs, messaging queues, and operational systems, all while maintaining performance, auditability, and reliability.

For enterprises with decades-old infrastructure, this becomes a significant challenge. Legacy systems often lack modern APIs, rely on batch-based processing, run on mainframes or monolithic architectures, and store data in inconsistent formats. AI integration in these environments becomes a major architectural transformation effort.

| Impact Area | Business Risk |
|---|---|
| Data Silos Persist | AI models fail due to incomplete or inconsistent inputs |
| High Implementation Costs | Long engineering cycles and workarounds inflate budgets |
| Security Vulnerabilities | Weak integration points increase exposure to cyber risks |
| Workflow Breakdowns | AI outputs cannot trigger downstream systems reliably |
| Slow Time-to-Value | AI initiatives take months or years instead of weeks |

How to Mitigate This Challenge

A scalable AI strategy requires architectural modernization paired with pragmatic integration choices:

1. Introduce an API-first, microservices-friendly ecosystem

Wrap legacy systems with APIs or middleware to make data accessible without rewriting core applications.

2. Use an enterprise integration layer (middleware, ESB, iPaaS)

This centralizes message routing, data transformation, and workflow orchestration.

3. Modernize data flows gradually

Shift batch workflows to event-driven or near-real-time pipelines (Kafka, Kinesis, Pub/Sub) to support AI’s data needs.

4. Implement strong enterprise data governance

Standardized schemas, data contracts, and lineage tracking ensure consistency across all systems AI interacts with.

5. Build AI into operational systems early

Instead of running in separate environments, integrate models directly into SAP, Salesforce, NetSuite, ServiceNow, Workday, or internal platforms.

6. Prioritize security & compliance in the integration layer

TLS, RBAC, secrets management, and audit logs should be embedded into every connection point.

With this structured approach, enterprises can overcome legacy constraints without undergoing costly full-system replacements.
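
Wrapping a legacy system (step 1 above) often starts with a thin adapter that turns a batch export into structured data an API can serve. The Python sketch below parses a fixed-width invoice file into JSON. The record layout, field names, and function names are invented for this example and would need to match the actual legacy format.

```python
import json

# Hypothetical fixed-width layout of a legacy batch export: (field, start, end).
INVOICE_LAYOUT = (
    ("invoice_id", 0, 8),
    ("customer_id", 8, 14),
    ("amount_cents", 14, 24),
    ("currency", 24, 27),
)


def parse_invoice_line(line):
    """Convert one fixed-width record into a typed dictionary."""
    record = {name: line[start:end].strip() for name, start, end in INVOICE_LAYOUT}
    # The legacy file stores amounts as zero-padded integer cents.
    record["amount"] = int(record.pop("amount_cents")) / 100
    return record


def invoices_as_json(batch_text):
    """Return the whole batch as the JSON payload an API endpoint could serve."""
    records = [
        parse_invoice_line(line)
        for line in batch_text.splitlines()
        if line.strip()
    ]
    return json.dumps(records)


# One sample record assembled field by field so the column widths are visible.
sample_line = "INV00001" + "C00042" + "0000012550" + "USD"
print(invoices_as_json(sample_line + "\n"))
```

Keeping the layout in one declarative table means a change in the legacy format touches a single definition, and the core application itself is never modified.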

# Challenge 4: Why Do Enterprises Struggle With Unclear ROI and Business Case in Their AI Adoption Journey?

Even when enterprises strongly believe in AI, many struggle to translate their ambition into measurable business outcomes. This is one of the biggest reasons AI projects lose momentum.

As a result, leadership doesn’t see a clear path to ROI, teams are unsure which KPIs to target, and AI initiatives feel disconnected from core business objectives.

When AI is framed as an innovation experiment instead of a business driver, it becomes vulnerable to budget cuts, skepticism from stakeholders, and prioritization conflicts.

Most enterprises fall into these common traps:

- Choosing use cases based on hype

- Launching pilots with no success metrics

- Investing in models before knowing how they will generate savings or revenue.

Without a business case, AI remains a cost center rather than a growth engine. Unclear ROI can sink even a good AI project, with consequences such as:

| Issue | Business Impact |
|---|---|
| No clear KPIs | Stakeholders cannot measure or justify value |
| Misaligned priorities | AI teams build solutions no one asked for |
| Budget overruns | Projects drag on without demonstrating outcomes |
| Poor adoption | Business units see AI as irrelevant to their goals |
| Loss of executive support | AI becomes “experimental spend” rather than a strategic investment |

How to Mitigate This Challenge

A strong AI program begins with a business-first strategy, not a technology-first mindset. Enterprises can eliminate ROI ambiguity by:

1. Linking AI initiatives directly to P&L outcomes

Every use case must map to revenue growth, cost reduction, risk mitigation, or customer experience improvement.

2. Defining measurable KPIs before building anything

Example KPIs for an AI project include:

- Reduce manual processing time by 40%

- Improve forecasting accuracy by 12–15%

- Automate 30% of repetitive back-office tasks

3. Building a prioritization framework (Impact × Feasibility)

Score use cases on business impact, data readiness, compliance effort, engineering complexity, and time-to-value.

4. Starting with ‘quick-win, high-ROI’ use cases

These deliver visible value in 8–12 weeks, helping gain internal trust and momentum.

5. Creating an AI Value Realization Dashboard

This aligns executives, tracks KPIs, and ensures ongoing ROI attribution.

6. Embedding ROI into the model lifecycle

Monitoring dashboards should capture cost-to-serve, drift impact, performance gains, and utilization rates.

When enterprises view AI as a measurable business transformation initiative, they achieve far more consistent and predictable returns.
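
The Impact × Feasibility framework from step 3 can be sketched as a small scoring function. In the Python example below, the 1 to 5 scale, the equal weighting of the feasibility factors, and every name (`UseCase`, `feasibility`, `priority_score`, `rank_use_cases`) are assumptions for illustration. Each organization would calibrate its own scale and weights.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """All scores use a 1-5 scale; the scale itself is an assumption."""
    name: str
    business_impact: int         # 1 = marginal, 5 = transformative
    data_readiness: int          # 1 = data missing, 5 = production-ready data
    compliance_effort: int       # 1 = light, 5 = heavy
    engineering_complexity: int  # 1 = simple, 5 = very complex
    time_to_value: int           # 1 = slow, 5 = fast


def feasibility(use_case):
    """Average the feasibility factors, inverting the two where higher is worse."""
    factors = (
        use_case.data_readiness,
        6 - use_case.compliance_effort,
        6 - use_case.engineering_complexity,
        use_case.time_to_value,
    )
    return sum(factors) / len(factors)


def priority_score(use_case):
    """Impact x Feasibility: a weak score on either axis lowers the total."""
    return use_case.business_impact * feasibility(use_case)


def rank_use_cases(use_cases):
    """Sort candidates from highest to lowest priority."""
    return sorted(use_cases, key=priority_score, reverse=True)


# Hypothetical candidates, for illustration only.
candidates = [
    UseCase("dynamic pricing", 5, 2, 4, 5, 2),
    UseCase("invoice triage", 4, 5, 2, 2, 4),
]
for case in rank_use_cases(candidates):
    print(f"{case.name}: {priority_score(case):.2f}")
```

Multiplying instead of adding the two axes is a deliberate choice in this sketch: a high-impact idea with very low feasibility should not outrank a solid, deliverable one.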

# Challenge 5: How Do Talent Gaps Across AI, Data, and Engineering Teams Impact AI Implementation?

Even with strong executive buy-in and promising AI use cases, many enterprises discover a stark reality: they lack the talent mix required to build, deploy, and maintain AI at scale. AI does not depend on a single skill. It is an ecosystem of competencies spanning data engineering, ML development, infrastructure, security, product thinking, and change management. Most organizations have pockets of expertise, but not the end-to-end capability needed for enterprise-grade AI.

A report by IDC finds that 46% of enterprises report a shortage of AI-skilled talent as one of the top barriers to adoption. Enterprises often have data scientists and analysts, but not the production engineers needed to turn notebooks into reliable systems or the architects who can design scalable pipelines.

This misalignment results in slow delivery cycles, fragile prototypes, security risks, and AI systems that never make it past experimental phases:

| Problem | Business Impact |
|---|---|
| Understaffed data engineering teams | Bottlenecks in data pipelines delay every AI project |
| Lack of MLOps expertise | Models never reach production or break soon after deployment |
| No cross-functional collaboration | AI solutions fail to meet business needs |
| Dependence on isolated ‘AI champions’ | Knowledge risk when key individuals leave |
| Slow iteration cycles | Competitors with stronger AI teams pull ahead |
| Overreliance on vendors | Inability to maintain or scale AI internally |

AI becomes a cycle of POCs that cannot survive in real-world environments, a common failure pattern documented across enterprise AI studies.

How to Mitigate This Challenge

1. Build multidisciplinary AI squads

Successful AI requires teams that blend:

- Data engineers

- ML engineers

- Cloud and platform architects

- Product managers

- Domain experts

- Governance and compliance leads

This replaces fragmented roles with shared ownership and drastically improves deployment speed.

2. Upskill existing teams with targeted training

Focus on priority areas:

- MLOps fundamentals

- Cloud-native AI deployment

- LLMOps and agentic workflows

- Data governance and observability

Upskilling helps enterprises reduce dependency on external vendors over time.

3. Partner with AI engineering specialists

Specialists accelerate delivery and fill critical gaps, especially for:

- Systems integration

- Scalable pipeline design

- RAG/LLM deployment

- Production monitoring and drift management

Instead of hiring for every role, which is impossible in current market conditions, enterprises supplement internal teams strategically.

4. Adopt an internal AI Center of Excellence (CoE)

A CoE ensures:

- Standardized best practices

- Cross-department knowledge sharing

- Governance consistency

- Faster, repeatable deployment patterns

It turns AI into an institutional capability, aligning and bringing together fragmented projects.

5. Use frameworks and reusable assets

Reusable prompt libraries, feature stores, templates, and architecture patterns reduce resource load, speed up iterations, and maintain consistency.

# Challenge 6: What Are the Governance, Security, and Compliance Risks Enterprises Face?

Even the most sophisticated AI systems collapse under weak governance. As enterprises scale AI, they face a widening risk surface. Lack of governance raises concerns like data privacy exposure, model bias, regulatory scrutiny, and uncontrolled model behavior. A Gartner survey found that AI governance remained among the top 3 challenges for organizations.

AI systems rely on sensitive data, complex decision logic, and dynamic model behavior, which makes risk management exponentially harder than in traditional analytics or software. Without proper governance frameworks, enterprises face:

- Regulatory penalties

- Biased decisions affecting customers or employees

- Data leaks through poorly secured models or APIs

- Rogue AI outputs undermining brand trust

- Loss of audit trail and explainability

- Inability to meet requirements under GDPR, HIPAA, SOX, or emerging AI Acts

Weak governance quickly erodes executive confidence, stalls adoption, and can shut down AI initiatives entirely.

The table below summarizes the causes and consequences of governance failures:

| Risk Area | Operational Consequence |
|---|---|
| Lack of model explainability | Business leaders cannot trust or approve AI-driven decisions |
| Missing audit trails | Compliance teams block deployment |
| Poor access controls | Sensitive data leaks into prompts or training sets |
| Bias and fairness issues | Legal exposure and reputational damage |
| No drift monitoring | Model accuracy degrades silently in production |
| Security gaps in pipelines | Attackers exploit APIs, LLM prompts, or data channels |
| Unclear accountability | No one owns the outcome when AI makes an error |

When these risks accumulate, AI transitions from an innovation opportunity to an organizational liability.

How Enterprises Can Mitigate Governance and Security Barriers

1. Establish an AI Governance Framework Early

A standardized framework should define data usage rules, model documentation templates, RAI (Responsible AI) standards, approval workflows, and role-based access controls. Embedding governance into lifecycle processes reduces rework and risk.

2. Implement Model Transparency & Explainability Tools

Use techniques and tooling such as SHAP, LIME, integrated explainability modules, decision trace logs, confidence scoring, and model cards so that compliance teams can audit, validate, and approve AI outputs.

3. Build Mandatory Security Layers

AI systems must be protected just like enterprise applications, including prompt security for LLMs, secrets and token management, API hardening, data masking and anonymization, and network segmentation. AI-specific threats, e.g., prompt injection and model poisoning, require proactive defense.

4. Deploy Continuous Monitoring & Drift Management

Automated checks should track data drift, concept drift, unusual inference patterns, and performance and accuracy metrics to prevent silent failures and maintain trust in AI decisions.

5. Align With Regulatory and Ethical Standards

Enterprises should map AI workflows to frameworks like GDPR, CCPA, HIPAA, SOX, NIST AI RMF, ISO/IEC 42001 (AI Management System), and the emerging EU AI Act to make sure enterprise AI programs are future-proof and compliant.
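
The data masking mentioned under the security layers in step 3 can be illustrated with a short Python sketch that replaces obvious identifiers with typed placeholders before text is sent to an LLM or written to a log. The three regular expressions and the `mask_pii` function are assumptions for this example and cover only simple US-style formats. Production systems generally need far broader PII detection.

```python
import re

# Illustrative patterns only; they do not cover every format of each identifier.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def mask_pii(text):
    """Replace each match with a typed placeholder and count what was masked."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, replaced = pattern.subn(f"[{label}]", text)
        if replaced:
            counts[label] = replaced
    return text, counts


prompt = "Contact jane.doe@example.com or 555-123-4567 about SSN 123-45-6789."
masked, summary = mask_pii(prompt)
print(masked)
print(summary)
```

Returning the counts alongside the masked text gives the audit log a record of what was removed without storing the sensitive values themselves.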

# Challenge 7: What Organizational Resistance and Change Management Challenges Do Enterprises Face in Their AI Adoption Journey?

Even with strong models, clean data, and modern infrastructure, AI adoption fails when people don’t change their behavior. The biggest barrier to enterprise AI success is no longer technology but organizational readiness and user adoption.

Employees often mistrust AI recommendations, resist new workflows, or feel threatened by automation. Leaders may support AI in theory, but struggle to drive change across departments. Without structured change management, AI becomes an unused tool.

A few reasons why behavioral resistance impacts scaling AI projects:

| Behavioral Challenge | Business Impact |
|---|---|
| Employees don’t trust AI outputs | AI insights ignored; model ROI drops to zero |
| Teams fear job displacement | Adoption slows, morale declines |
| Leaders fail to evangelize AI | No cross-departmental alignment |
| Lack of training & enablement | Users don’t understand how to use AI tools |
| Divided ownership | AI initiatives stall due to unclear accountability |
| No process redesign | AI gets layered onto broken workflows and fails |

How Enterprises Can Mitigate Organizational Resistance

1. Treat AI as a Change Management Program

AI adoption requires shifts in processes, decision-making, roles, and team responsibilities. Enterprises must manage AI rollouts like major business transformation programs.

2. Build Clear Ownership and Cross-Functional Alignment

Define who owns AI outcomes, data quality, workflow governance, and performance monitoring to prevent confusion and ensure accountability.

3. Establish Early Wins to Build Trust

Small, high-impact pilots increase confidence and create internal momentum.

Examples include automated reporting, agent-assisted customer support, and predictive alerts to prove value quickly and reduce skepticism.

4. Invest in User Training, Enablement & Literacy

Training should cover how the model works, when to trust AI recommendations, how to escalate anomalies, and how to interpret outputs. Upskilling builds comfort, confidence, and responsible usage.

5. Communicate Transparently About the Role of AI

Leaders must dispel fears by emphasizing that AI augments people rather than replacing them, that new roles will emerge, and that team expertise remains critical. Without transparency, employees will assume the worst.

# Challenge 8: Why Can Following a Tools-First Approach Instead of a System-Level AI Strategy Be Harmful?

One of the biggest and most expensive reasons enterprise AI adoption fails is the tendency to chase tools before defining strategy. Companies rush to deploy LLM copilots, analytics platforms, automation tools, or vendor demo models because they appear fast and easy. But without aligned business goals, data foundations, integration pathways, and governance, these “quick wins” rarely scale.

This creates the ‘AI Fragmentation Trap’: dozens of disconnected tools, overlapping capabilities, rising costs, and no measurable enterprise-wide value.

| Root Issue | Impact on the Enterprise |
|---|---|
| AI tools adopted in silos | No cross-functional synergy; duplicate investments |
| Lack of alignment with KPIs | Tools show “activity” but no real ROI |
| Vendors overpromise ease of deployment | Enterprises underestimate integration & data requirements |
| No architecture or governance roadmap | Security gaps, poor reliability, inconsistent results |
| Tools don’t integrate into workflows | Employees abandon them; low adoption rates |
| No scalability plan | Pilots succeed but fail in full-scale rollout |

How Enterprises Can Mitigate This Challenge

1. Begin With Business Problems, Not Technology Options

Successful AI adoption starts with clarity. Ask questions such as:

- What decisions need to be automated?

- Which workflows need improvement?

- Where does the business lose time, money, or accuracy?

2. Build an Enterprise AI Architecture Before Selecting Tools

A scalable AI strategy requires unified data layers, reusable model components, API-first design, governance and monitoring, and shared infrastructure, so that tools plug into a stable ecosystem.

3. Evaluate Tools on Integration Capability, Not Vendor Hype

Some key questions enterprises should ask:

- Does it integrate with ERP/CRM/Supply Chain systems?

- Does it support enterprise security and compliance?

- Does it provide APIs for orchestration?

- Does it fit into the existing data environment?

- Is the vendor cloud-, stack-, and model-agnostic?

4. Standardize Governance Before Tool Adoption

This includes access controls, data lineage and versioning, validation pipelines, drift detection, bias and fairness guidelines, and retention and audit policies. With governance in place, tools remain reliable and compliant.

5. Sequence Adoption With an Enterprise AI Roadmap

Instead of adopting tools randomly, enterprises should:

- Choose 3–5 high-ROI use cases

- Prioritize those aligned with KPIs

- Build shared infrastructure early

- Integrate models into existing workflows

- Scale horizontally once the foundations are stable.

# Challenge 9: Why Do Enterprises Struggle to Maintain Model Accuracy in Real-World Conditions?

Even after enterprises successfully deploy AI models, they struggle to keep those models accurate, reliable, and aligned with changing business conditions. Real-world environments introduce noise, new data patterns, shifting user behavior, and operational drift, all of which can degrade model performance over time. Many AI systems that work well during pilots begin to fail silently once they reach production.

A few reasons why this challenge occurs and the subsequent consequences:

| Root Cause | Impact on AI Model Performance |
|---|---|
| Data drift: input data changes over time | Predictions become inaccurate or biased |
| Concept drift: business patterns shift | Models no longer match real-world dynamics |
| Lack of monitoring & alerts | Failures go unnoticed until the impact is severe |
| No retraining schedule | Performance steadily declines |
| Changing customer behavior | Recommendation/personalization becomes irrelevant |
| Evolving regulatory demands | Models become non-compliant |
| Relying solely on one-time training | Models become stale quickly |

As a result, enterprises often see declining accuracy, rising error rates, faulty automation decisions, customer dissatisfaction, and operational risk.

How Enterprises Can Mitigate This Challenge

1. Establish Continuous Monitoring and Performance Dashboards

Models should be monitored like critical business systems. Enterprises need dashboards that track:

- Accuracy and precision trends

- False positives and false negatives

- Drift patterns

- Latency and throughput

- Data quality anomalies

With automated alerts, teams can respond the moment degradation begins.

2. Implement Automated Drift Detection

Enterprises must detect:

- Data drift: a change in input distributions

- Concept drift: a change in how inputs relate to outcomes

- Feature drift: a change in the relationships between input variables

Techniques include KL divergence, PSI, statistical tests, and embedding comparisons for LLMs.

3. Create a Scheduled Retraining and Validation Cycle

Enterprises should adopt a retraining cadence, such as:

- Monthly for fast-changing industries like retail, fintech, customer support, etc.

- Quarterly for stable environments like manufacturing, logistics, etc.

Retraining must be paired with revalidation, smoke testing, and A/B testing before redeployment.

4. Use MLOps/LLMOps for End-to-End Lifecycle Reliability

A strong operational backbone includes CI/CD pipelines for ML, version control for models and datasets, automated model deployment, shadow testing and canary releases, feature stores and vector stores, and prompt management for LLM applications.

5. Align Business Inputs With Model Updates

Market shifts, seasonality, pricing updates, new customer segments, and regulatory changes should directly inform retraining cycles and feature engineering. AI teams must collaborate closely with business teams to ensure model assumptions stay current.

6. Run Post-Deployment Audits

Quarterly or semi-annual audits help identify bias, performance disparity, changing regulatory expectations, vulnerability or security risks, and model reliability under edge-case conditions.
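
Of the drift detection techniques listed in step 2, the Population Stability Index (PSI) is simple enough to sketch in a few lines of Python. The version below compares a baseline sample of one numeric feature with a recent sample, using equal-width buckets derived from the baseline. The function name, the bucket count, and the `epsilon` floor for empty buckets are assumptions for this example. The thresholds in the closing note are a widely used rule of thumb, not a formal standard.

```python
import math


def population_stability_index(expected, actual, bins=10, epsilon=1e-6):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    low, high = min(expected), max(expected)
    if high == low:
        raise ValueError("baseline sample has no spread")
    width = (high - low) / bins

    def bucket_shares(values):
        counts = [0] * bins
        for value in values:
            # Clamp so values outside the baseline range land in the edge buckets.
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1
        # The epsilon floor avoids log(0) and division by zero for empty buckets.
        return [max(count / len(values), epsilon) for count in counts]

    expected_shares = bucket_shares(expected)
    actual_shares = bucket_shares(actual)
    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(expected_shares, actual_shares)
    )


# Hypothetical samples: the recent data is the baseline shifted upward by 50.
baseline = list(range(100))
recent = [value + 50 for value in baseline]
print(population_stability_index(baseline, baseline))
print(population_stability_index(baseline, recent))
```

A common rule of thumb treats a PSI below 0.1 as stable, 0.1 to 0.25 as worth investigating, and above 0.25 as a significant shift that may justify retraining. These cutoffs should be tuned per feature and per business context.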

Turning Enterprise AI Challenges Into Scalable Business Outcomes

Enterprise AI initiatives fail when the foundations, workflows, and integration layers required for AI success aren’t in place. Every challenge outlined above can be solved with the right engineering, governance, and organizational alignment.

RTS Labs helps enterprises move from scattered pilots and stalled initiatives to production-grade, high-ROI AI systems that scale confidently across the business.

Here’s how we turn challenges into measurable outcomes:

1. We Build AI on Top of Trusted, Unified Data

We establish modern data architectures for lakehouse, warehouse, pipelines, MDM, and observability so AI models run on clean, connected, governed data, which is the #1 predictor of AI success.

2. We Operationalize AI, Not Just Prototype It

Our MLOps and deployment frameworks ensure AI moves from PowerPoint to production with automated monitoring, drift detection, versioning, CI/CD, and retraining cycles that keep models accurate long-term.

3. We Integrate AI Across ERPs, CRMs, Core Workflows & Legacy Systems

AI is only valuable when it works where employees work. We connect AI to enterprise tools for seamless workflow adoption.

4. We Tie Every AI Initiative to ROI, KPIs & Measurable Business Impact

We prioritize initiatives based on cost savings, revenue uplift, efficiency gains, risk reduction, or customer outcomes, so that every AI initiative has a clear business owner and success metric. Read a few of our case studies here.

5. We Build Cross-Functional Alignment & Change Management From Day One

We help enterprises redesign workflows, train teams, and build AI fluency across the organization, eliminating resistance and ensuring real adoption.

6. We Protect Enterprises With Governance, Security & Compliance-First AI

We build in governance controls such as regulatory mapping, explainability, and audit logs, so that AI meets the standards required in finance, healthcare, insurance, logistics, and other regulated environments.

7. We Keep Models Accurate in the Real World

With automated drift detection, retraining pipelines, model audits, and performance dashboards, we ensure AI systems stay reliable, relevant, and resilient over time.

Break Through AI Adoption Barriers With RTS Labs

Enterprise AI adoption is hard, but with the right engineering foundations, governance, and integration strategy, it becomes a transformative competitive advantage. RTS Labs helps enterprises break through adoption barriers and build AI that performs consistently, scales intelligently, and delivers measurable business impact.

If you’re ready to turn AI challenges into production-ready results, schedule an Enterprise AI Readiness & Adoption Workshop with RTS Labs. Talk to our AI Expert Now.

FAQs

1. Why do most enterprise AI initiatives fail to scale beyond pilots?

Most AI pilots fail because enterprises overlook foundational requirements, including clean data, integration pipelines, governance, and MLOps. Without these, AI cannot function reliably in real workflows, no matter how strong the model is.

2. How can enterprises measure whether they are truly ready for AI adoption?

Readiness depends on five pillars: unified data, cloud architecture, governance maturity, cross-functional ownership, and integration capability. A structured AI readiness assessment reveals gaps before expensive buildout begins.

3. Which internal teams should own AI adoption inside an enterprise?

Successful organizations assign joint ownership across data engineering, IT, and business units supported by an executive AI sponsor. AI fails when it sits only with data science teams or innovation labs.

4. How can enterprises prevent AI models from degrading over time?

Enterprises must implement drift monitoring, retraining pipelines, versioning, human-in-the-loop governance, and real-time performance dashboards. This is where MLOps maturity becomes critical.

5. How does RTS Labs help enterprises accelerate AI adoption?

RTS Labs integrates strategy, data engineering, model development, MLOps, governance, and workflow integration for enterprises to move from fragmented experiments to scalable, production-grade AI systems.